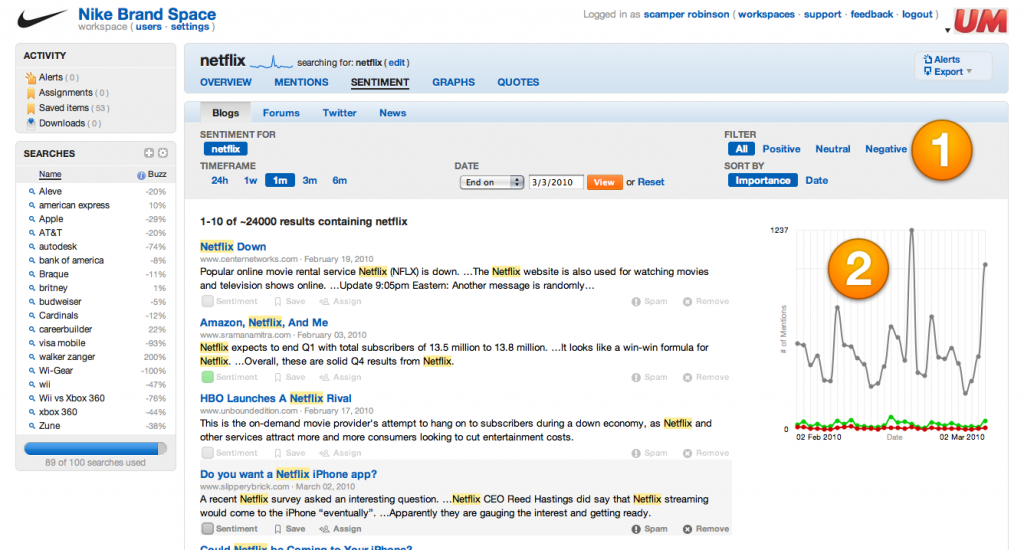

Increasingly, our everyday lives are influenced by computing algorithms that we cannot see or control.

This is the somewhat alarmist, but nonetheless well-grounded, claim made by Kevin Slavin in his recent TED talk (shown in the embedded player to the right). It’s not just financial markets, but also movie scripts, book recommendations and advertising selections… the online and media world is increasingly using software algorithms to tailor itself to what a mathematical equation thinks we want.

One of the most alarming examples, I find, is Facebook’s algorithm for determining what warrants “top news”. Effectively, Facebook is deciding for you which of your many friends’ updates are most important. And the implications of that are quite scary. What if a friend thinks you are not listening because Facebook filtered out their update? Or what if you miss an opportunity for a future romantic involvement because Facebook hides a party update from someone it thinks you don’t care about?

In the future, we will increasingly have to think carefully about which decisions we allow software to make for us, and which we should keep full control of ourselves.

Watch the TED video here or embedded above, or read the BBC News article for more information.

Like all images on the site, the topic icons are based on images used under Creative Commons or in the public domain. Originals can be found from the following links. Thanks to